perhaps. I know my prompts are like three paragraphs long lol. And then I do three or four iterations to clean things up.

When I messed with vibe coding over winter break, the basic approach was to create a really solid requirements document first. You go back and forth with the AI about what you want for a minimum viable product, appropriate data structures, the order in which to build the code, and how to test it. You check repeatedly whether anything is missing or should be rearranged before asking it to build anything. It’s basically the same approach a human team should use. Then while the AI builds, it can reference the plan, and you can iterate.

I’ve read complaints about AI that it means young people won’t learn to think and communicate in an orderly and logical manner.

It seems to me that writing out lengthy “prompts” for AI requires thought and accurate communication.

I’m so old that I worked with actuaries who wrote “specs” for programs that programmers would write. AI prompts for larger jobs sound similar.

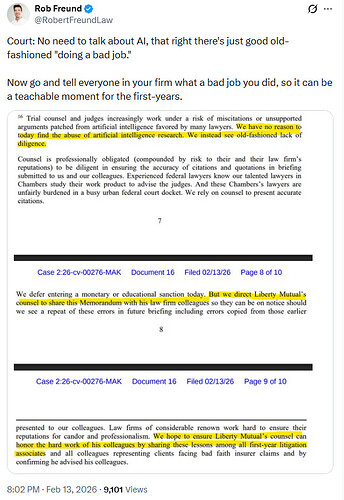

By the way, that was a claim dispute with Liberty Mutual.

It was Liberty Mutual’s lawyers who were sloppy.

I haven’t been following humanoid robots, so this is new to me.

It is impressive.

Looks like we now have the ability to run four different AI models at the same time via the same prompt pathway.

This is a good idea. It should cut down on the weaknesses each model has in specific areas.

Perplexity - Claude - ChatGPT - Gemini

So we use AI to run AI to get better results?

I just saw this:

Distressing.

I’ve been treating AI like my assistant at work rather than trying to outsource any key parts of my job. It’s been going pretty well.

Before a meeting, I’ll have it come up with topics I should prepare for or questions I should ask. I’ve provided it with pitch decks and asked it to break them down for me into the key components. This has allowed me to focus the conversation on what matters most.

I have asked Copilot to remember some key metrics I am evaluated against and will have it connect those things to my meetings or projects. I’ve used it to go through my email to help me write my year-end self-evaluation. I’ve had it analyze my calendar to identify areas where I can be more efficient.

Is it groundbreaking? Not at all. Is it making me more focused in my role? Yes. I’m not asking it to make a strategic decision, I’m still owning that. But I have more time to do that stuff now.

Can it write emails and Teams messages responding to my boss’ idiotic questions and requests?

Automatically, I mean. So I never have to actually see or read them.

Something tells me that you ought to read/edit anything from AI before sending it to someone with power over your paycheck . . .

You could include in the prompt - “Write it in the style of a straight shooter with upper management written all over them”

The more I hear AI execs speak, the more I think they just dislike people in general.

I struggle to understand why people listen so much to Sam Altman. He’s not particularly honest and forthcoming, he’s not super-intelligent, and he’s not a cult of personality like Musk. He’s just the guy who happens to be in charge. Kind of like Zuckerberg, except nobody cares about Zuckerberg?

Sometimes I think we have to suppose the uber-rich are also uber-smart because it justifies our belief that we live in a meritocracy.

I think it peeves me in particular because the AI world is loaded with actual geniuses. Some of them are uncharismatic, but Sam Altman also isn’t charismatic.

OpenAI being called in for a chat with the AI Minister.

https://www.cbc.ca/news/politics/open-ai-summoned-ottawa-tumbler-ridge-9.7103281

I get they’re in an awkward spot here where they have to balance between privacy and protecting the public. I’m not really sure where the line is on this.