In a talk by Hannah Fry (Hannah Fry - Wikipedia)…

Reminds me of a story I read a long time ago (no link of course). It was a variation of the shell game: hide the pea under one of three shells, mix them up, and challenge the bettor to find it.

In this case the shells were aluminum foil pans that might have come from small frozen pot pies. The contestants were chickens who did remarkably well, solving cases where humans just lost track.

The catch was that the pea always went under the same pan. There were small imperfections on the pans that humans didn’t notice, but that stood out to the chickens like big red X’s, because chickens survive by seeing tiny seeds and insects.

Have been given access to Perplexity Pro via my Revolut account and it’s pretty decent for normal day-to-day use (aggregating information into summary format).

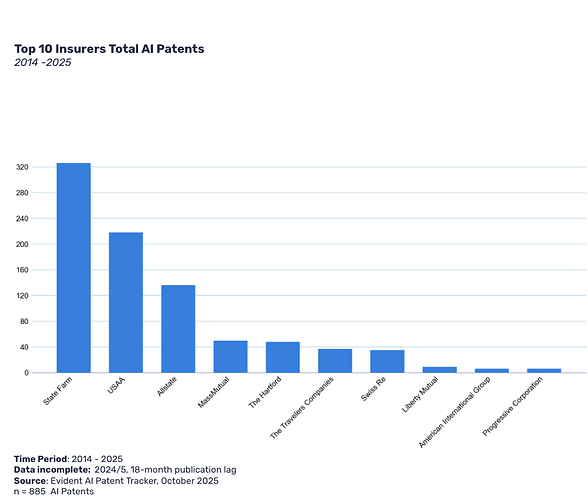

I assume the article is focused on US patents, even though Evident has a broader scope in its insurer/reinsurer surveys.

Unsurprised this happened in Louisiana. Some boys created AI nudes of girls in their school. The girls who were subject to this complained to the guidance counsellor. Adults at the school couldn’t find evidence of the pictures, principal decides the girls were lying, making it up. Boys are sharing the photos on the bus. One of the girls starts a fight. She gets expelled, nothing happens to the boy.

Eventually police charge two boys under state law.

I’m somewhat surprised mutually assured destruction hasn’t caught on yet. If you make one of someone, they can easily do it to you…

Time to give a couple of people a micropenis…

These jerks all stole my brilliant idea to use AI to do things. I should sue.

AI messes up sometimes:

“Cape Breton fiddler Ashley MacIsaac had a concert cancelled and is worried for his safety after he says Google incorrectly described him as a sex offender in an AI-generated summary last week.

He was arranging to play a concert last Friday at the Sipekne’katik First Nation a little north of Halifax when he learned that its leadership had changed their mind. They had read online, Mr. MacIsaac was told, that he had convictions related to internet luring and sexual assault.

That information is not true, and was later revealed to have been the result of Google’s AI-generated search summary blending MacIsaac’s biography with that of another man, who appears to be a Newfoundland and Labrador resident bearing the same last name.”

Wow. I’m back to ‘never ask AI to answer a question that you don’t already know the answer to’.

There’s a big fisheries guy in Nova Scotia that shows up with the same name as me, but I don’t think he’s a criminal so, so far so good.

So there’s a recent Reddit thread talking about how UnitedHealthcare in the US is using AI to auto-deny claims and clearly getting a lot of them wrong.

Which makes me wonder if we can ‘guarantee’ results using AI in insurance, i.e. in underwriting. I have a rudimentary understanding of how I think guardrails work, but let’s say: “we want to underwrite life insurance policies, and guarantee that we don’t have any systemic bias that denies people.” Not sure that’s possible (I’m also not sure it’s not possible. I just don’t understand enough to have an opinion).
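
Short of a guarantee, one thing that can at least be checked after the fact is whether approval rates differ across groups. A minimal sketch in Python, assuming a made-up list of (group, approved) decisions. The group labels, the data and the function names are all hypothetical, and a ratio near 1 would not prove there is no bias, only that this one measure didn’t flag any.

```python
from collections import defaultdict

# Hypothetical audit log: (group label, was the application approved?)
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2 +
    [("B", True)] * 5 + [("B", False)] * 5
)

def approval_rates(rows):
    """Share of approved applications per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in rows:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def approval_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so the rates are equal
    return min(rates.values()) / highest

rates = approval_rates(decisions)
print(rates)                  # approval rate for each group
print(approval_ratio(rates))  # well below 1.0 here, so the groups are treated differently
```

This only measures outcomes on decisions already made. It says nothing about why the rates differ, which is the part a guarantee would need.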

Booking an appt. with my AI PhD guy to ask him about this.

As I understand it, the fundamental challenge is this: these (LLMs) are statistical models trained to predict the next word(s).

However, we want the “right” answer. This may not be the most probable set of words.

A related complication: the most probable next word will depend on the context. But a lot of context is never written down. Even if it is written down somewhere, it might not make it into the prompt. And even if it makes it into the prompt, it might not be given enough weight.
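
To make the “most probable word depends on the context” point concrete, here is a toy next-word predictor in Python. It is only a word-count sketch, not how a real LLM works internally, and the four-sentence corpus and the function names are invented for illustration.

```python
from collections import Counter, defaultdict

# Made-up "training text"; a real model is trained on vastly more data.
corpus = [
    "claim denied because treatment was experimental",
    "claim denied because treatment was experimental",
    "claim denied because form was incomplete",
    "claim approved because treatment was necessary",
]

def train(sentences, context_len):
    """Count which word follows each run of `context_len` words."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(context_len, len(words)):
            context = tuple(words[i - context_len:i])
            counts[context][words[i]] += 1
    return counts

def most_probable_next(counts, context):
    """Return (word, probability) for the likeliest next word, or None if the context is unseen."""
    followers = counts.get(tuple(context))
    if not followers:
        return None
    word, n = followers.most_common(1)[0]
    return word, n / sum(followers.values())

short_ctx = train(corpus, 2)  # model that only sees the last two words
long_ctx = train(corpus, 4)   # model that sees the last four words

print(most_probable_next(short_ctx, ["treatment", "was"]))
print(most_probable_next(long_ctx, ["approved", "because", "treatment", "was"]))
```

With only “treatment was” as context, the likeliest next word is “experimental” (two of the three examples). Once “approved” is part of the context, the likeliest word becomes “necessary”. Same model family, same data, different answer, purely because of what made it into the context.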

I was going to say that this is true of LLMs, not AI in general, and United wouldn’t use an LLM for this. Then I gave my head a shake, and of course they’re using an LLM. That’s, holy cow, well let’s just say Free Luigi.

I just assumed it was an LLM.

It seems we need more regulation on this, doesn’t it?

Well, I’m not a fan of regulation but... something.

I would argue that, at least in the actuarial world, this type of use of AI is already regulated. There’s no way anyone actuarial should be approving or involved in the type of use that UnitedHealthcare is making of LLMs. I’m sure that basic requirements of the credentials would prohibit the use of this stuff with guardrails that wide.

So maybe just a paper from the SOA/CAS saying ‘here’s how you apply your requirements to the USE of AI/How to interpret’.

Jeff Hutchings? Boris Worm? Ransom Myers? Or are you talking fish harvesters and not scientists?

I would have thought they’d use a purpose-built AI or machine learning algorithm to do this.

If I were the one at UnitedHealthcare doing this, we’d be collecting a set of attributes for each claim of a particular type (e.g. patient age, sex, medical parameters, etc., and whether the claim was approved or disapproved) and then investigating the use of k-nearest neighbour analysis, support vector machines or regression trees to fit a model that would either automatically approve or deny the claim. I would not be using an LLM for this. Perhaps for better performance, you’d have a review panel go over each claim being used in the model building process to make sure the right decision was made (e.g. so you aren’t messing up your data set with a bunch of poorly made or erroneous decisions that got reversed).
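
As a rough illustration of the k-nearest neighbour idea, here is a minimal pure-Python sketch. Everything in it is an assumption for the sake of example: the three attributes (age, claim amount in thousands, number of prior claims), the six “reviewed” historical claims and the function names are made up, and nothing here reflects what any insurer actually does.

```python
import math
from collections import Counter

# Hypothetical reviewed claims: (age, claim amount in $000s, prior claims) -> decision
history = [
    ((34, 1.2, 0), "approve"),
    ((45, 2.0, 1), "approve"),
    ((29, 0.8, 0), "approve"),
    ((61, 15.0, 4), "deny"),
    ((58, 12.5, 3), "deny"),
    ((66, 18.0, 5), "deny"),
]

def feature_ranges(rows):
    """(min, max) of each attribute, used to put attributes on a common 0-1 scale."""
    columns = zip(*(features for features, _ in rows))
    return [(min(c), max(c)) for c in columns]

def scale(features, ranges):
    """Min-max scale one claim so no single attribute dominates the distance."""
    return [(x - lo) / ((hi - lo) or 1.0) for x, (lo, hi) in zip(features, ranges)]

def knn_predict(rows, query, k=3):
    """Majority decision among the k historical claims closest to `query`."""
    ranges = feature_ranges(rows)
    q = scale(query, ranges)
    by_distance = sorted(rows, key=lambda row: math.dist(q, scale(row[0], ranges)))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

print(knn_predict(history, (31, 1.0, 0)))   # resembles the approved claims
print(knn_predict(history, (63, 16.0, 4)))  # resembles the denied claims
```

The model can only echo the decisions in its history, which is why the review panel step matters: bad historical decisions go straight into the predictions.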

First: I don’t think we really know what they are doing, right? So this reflects my worry about how LLMs might be used as much as anything.

My guess is that using the kind of approach you mention would be very hard. It would probably take many different models trained for different kinds of claims.

My impression is that this is the way these LLMs are sometimes being sold: with the claim that the model already knows. It has been trained on huge amounts of data, and within a few years will be a super intelligence, smarter than any human who has ever lived. Simply give it the right prompt, and it can “reason” its way to the right answer.

I think the distinction fish guy is drawing is that to be done right, this needs its own mathematical model, and not an llm. generating a new model vs using an llm are two entirely different things (unclear if people are aware of this or not).

imo llms are great when you need something to read and speak. but leave out the thinking part.